Standard imaging sensors have limited dynamic range and are therefore sensitive to only part of the illumination range present in a natural scene. The dynamic range can be improved by acquiring multiple images of the same scene under different exposure settings and then combining them. We have developed a multi-sensor camera design, called the Split-Aperture Camera, that acquires multiple registered images of a scene at different exposures, from a single viewpoint, and at video rate. The resulting multiple-exposure images are then used to construct a high dynamic range image.

There are three main steps in composing the high dynamic range image. First, we transform the intensities recorded by each sensor into actual sensor irradiance values. This mapping can be obtained using radiometric calibration techniques applicable to normal cameras. Second, since the irradiance at corresponding points on different sensors can differ, we need a correction factor so that a scene point is represented by a unique value, independent of the sensor on which it is imaged. This factor is spatially variant and differs from sensor to sensor. The third and last step is fusing the intensity-transformed images into a single high dynamic range mosaic. For every pixel on a canvas (an empty image with the same dimensions as any of the sensors), we have a set of transformed intensity values, one from each of the images. We discard the values from images in which that location was either saturated or clipped. Since the values that remain may be noisy, we combine them to obtain the final value.
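The three steps above can be sketched for a single pixel as follows. This is only an illustrative sketch under stated assumptions, not the method used in the prototype: the function name `fuse_pixel`, the cut-off values `low` and `high`, the identity default for the inverse response, and the use of a plain average to combine the surviving values are all hypothetical choices. The text does not specify how the values are combined or how the correction factor is computed; here the known filter transmittance and an optional per-sensor correction stand in for it.

```python
from typing import Callable, List, Optional, Sequence


def fuse_pixel(
    readings: Sequence[int],
    transmittances: Sequence[float],
    inverse_response: Callable[[int], float] = float,
    corrections: Optional[Sequence[float]] = None,
    low: int = 5,
    high: int = 250,
) -> Optional[float]:
    """Fuse one pixel's readings from several sensors into a single estimate.

    readings         8-bit values recorded at the same pixel, one per sensor
    transmittances   filter transmittance in front of each sensor
    inverse_response maps a recorded value to sensor irradiance
                     (assumed linear by default; a real camera needs
                     radiometric calibration)
    corrections      hypothetical per-sensor correction at this pixel
    low, high        hypothetical cut-offs for clipped / saturated values
    """
    if corrections is None:
        corrections = [1.0] * len(readings)

    estimates: List[float] = []
    for value, t, c in zip(readings, transmittances, corrections):
        # Discard readings that are clipped or saturated.
        if value <= low or value >= high:
            continue
        irradiance = inverse_response(value)  # step 1: intensity to irradiance
        estimates.append(c * irradiance / t)  # step 2: sensor-independent value

    if not estimates:
        return None  # no sensor produced a usable reading at this pixel
    # Step 3: combine the remaining (possibly noisy) values; a plain
    # average is an assumption made for this sketch.
    return sum(estimates) / len(estimates)
```

With transmittances of 1, 0.5 and 0.25, a pixel read as 200, 100 and 50 on the three sensors fuses to 200.0, while a bright pixel read as 255, 255 and 180 keeps only the third reading and yields 720.0, a value beyond the range of any single 8-bit sensor.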

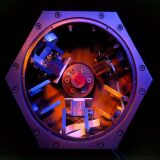

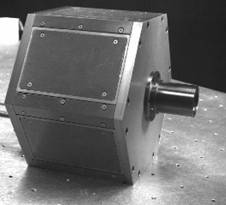

To build a prototype, we used a pyramid beam-splitter, which is the corner of a mirror cube (a 3-face pyramid), and three Sony CCB-ME37 monochrome board cameras as sensors. The glass cube corners are commercially available and marketed as solid retroreflectors. The triangular surfaces were coated with a metallic coating such as aluminum to obtain the three desired reflective surfaces. We designed a special lens whose aperture is located just behind the lens, and aligned the pyramid with the optical axis with its tip at the center of the aperture. The positions of the sensors were carefully calibrated to ensure that all the sensors were normal to the split optical axes and equidistant from the tip of the pyramid, and that the images from all sensors overlaid exactly on top of each other. This arrangement ensures that the distribution of light across the three sensors is independent of the 3D coordinates of the objects being imaged. We placed thin-film neutral density filters with transmittances of 1, 0.5 and 0.25 in front of the sensors to obtain images capturing different parts of the illumination range. The frame grabber was a Matrox multichannel board capable of synchronizing and capturing three channels simultaneously. Figure 1 shows the prototype. Figures 2 and 3 show samples of images acquired by the prototype.

Figure 1: Photograph of a prototype of the high dynamic range Split Aperture Camera

Figure 2: (a)-(c) The three images of a parking lot obtained by the high dynamic range Split Aperture Camera, employing three 8-bit sensors. The three filters yield three images with brightness values in the ratio 1:2:4. (d) The resulting high dynamic range image; the intensity range in this image has been compressed to 0-255 using a nonlinear mapping for display purposes.

Figure 3: A sequence of frames in a high dynamic range video sequence of a parking lot, generated by fusing the three multi-exposure input sequences at video rate.
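The caption of Figure 2(d) mentions a nonlinear mapping to 0-255 for display but does not say which one was used. As one possible illustration, the sketch below uses a logarithmic curve; the function name `compress_for_display` and the choice of a logarithm are assumptions made here, not the mapping used for the figure.

```python
import math
from typing import List, Sequence


def compress_for_display(values: Sequence[float], max_value: float) -> List[int]:
    """Map fused high dynamic range values to 0-255 with a log curve.

    values     fused pixel values (non-negative)
    max_value  largest value to represent; it maps to 255 (must be > 0)
    """
    scale = 255.0 / math.log1p(max_value)
    out: List[int] = []
    for v in values:
        v = max(0.0, min(v, max_value))  # clamp to the representable range
        out.append(round(scale * math.log1p(v)))
    return out
```

With three 8-bit sensors and a minimum transmittance of 0.25, fused values can reach roughly 4 x 255 = 1020, so `compress_for_display(values, 1020)` would map the full fused range onto 0-255 while allocating more display levels to darker regions.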

- M. Aggarwal and N. Ahuja, Split Aperture Imaging for High Dynamic Range, Proc. International Conference on Computer Vision, Vancouver, Canada, July 2001, 10-17.

- M. Aggarwal and N. Ahuja, Split Aperture Imaging for High Dynamic Range, International Journal of Computer Vision, Vol. 58, No. 1, June 2004, 7-17.